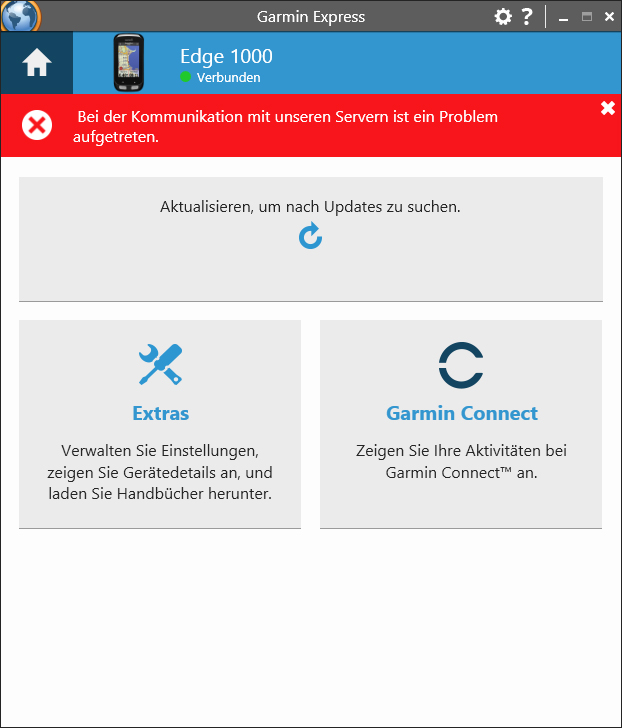

So I fixed both issues and rushed a new release on Sunday. It would be a race between Apple and Garmin: would Apple approve the new version before Garmin fixed the outage? It turned out to be a tough race to judge, because the day after, on Monday morning when I woke up, the app was approved and Garmin was sending activities to my server again. I never looked too closely at which one technically happened first overnight.

When I saw activities coming in again, I looked at my server and saw that the queue processing the activities fed by the Garmin API was close to 10,000 requests behind and growing. The server was getting activities faster than it could process them… Given the fairly low number of users of ConnectStats, my server runs on a single computer in the cloud, so it has limited ability to scale. It works great with the regular trickle of activities from the app users, but here Garmin was sending close to 5 days of activities all at once…

You can see in the list the number of distinct active users sending an activity and the number of activities the server processed each day over the last few weeks. What the table does not show is that all these activities were sent in a very short amount of time, while the usual number comes in as a trickle during the day…

A little bit of thinking and investigation later, I found another place where my database update wasn’t properly optimised. Monitoring a large queue being processed showed clearly that one aspect of the processing was quite the bottleneck.
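To make the "faster than it could process" problem concrete, here is a minimal Python sketch of how a queue falls behind when a burst arrives. The rates are made up for illustration and are not measurements from the ConnectStats server, and the function names are hypothetical.

```python
import math


def backlog_over_time(arrivals_per_min, processed_per_min, minutes, start_backlog=0):
    """Return the queue length at the end of each minute."""
    backlog = start_backlog
    history = []
    for _ in range(minutes):
        # The queue grows by what arrives and shrinks by what gets processed,
        # but it can never go below empty.
        backlog = max(0, backlog + arrivals_per_min - processed_per_min)
        history.append(backlog)
    return history


def minutes_to_drain(backlog, arrivals_per_min, processed_per_min):
    """Minutes needed to clear the backlog, or None if it never drains."""
    net_per_min = processed_per_min - arrivals_per_min
    if net_per_min <= 0:
        return None  # arrivals keep up with or outpace processing
    return math.ceil(backlog / net_per_min)


# Hypothetical numbers: the server handles 100 activities per minute.
trickle = backlog_over_time(arrivals_per_min=20, processed_per_min=100, minutes=10)
burst = backlog_over_time(arrivals_per_min=300, processed_per_min=100, minutes=50)

print(trickle[-1])  # queue stays empty under the usual trickle
print(burst[-1])    # queue keeps growing for as long as the burst lasts
print(minutes_to_drain(burst[-1], arrivals_per_min=20, processed_per_min=100))
```

With these assumed rates the queue only starts to shrink once the burst is over, and the time to catch up depends on the gap between processing speed and the regular trickle. That is why speeding up the slowest step of the processing matters more than anything else on a single machine.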
